Tree of Thoughts (ToT) 🌳

Explore a powerful framework that generalizes Chain-of-Thought prompting, enabling LLMs to perform deliberate reasoning with lookahead and backtracking.

This content is adapted from Prompting Guide: Tree of Thoughts. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

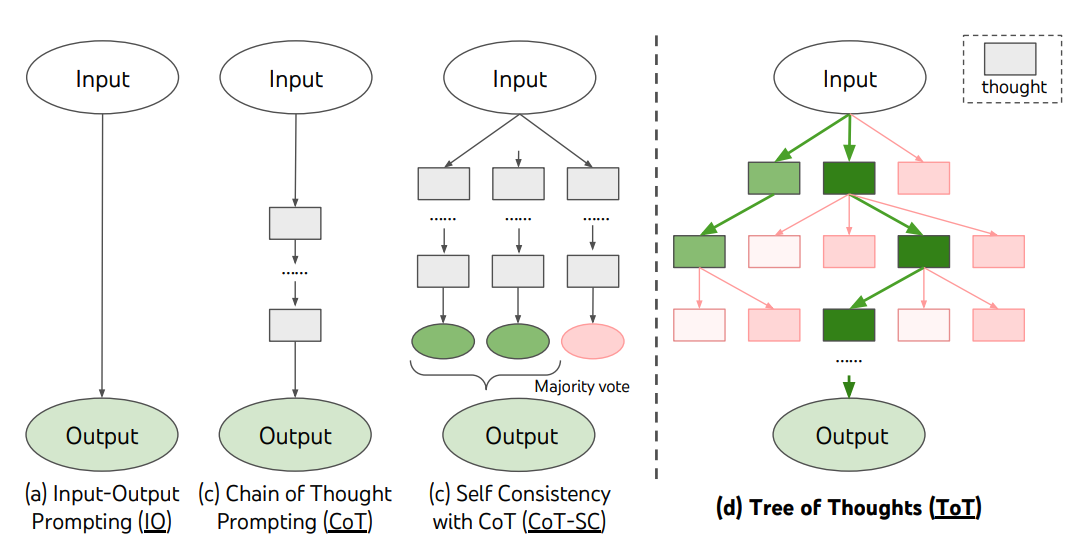

For complex tasks that require strategic exploration or lookahead, traditional prompting techniques often fall short. Tree of Thoughts (ToT), proposed by Yao et al. (2023) and Long (2023), is a framework that encourages exploration over "thoughts" that serve as intermediate steps for problem-solving.

How it Works

ToT maintains a tree of thoughts, where each node represents a coherent language sequence serving as an intermediate step. This allows the model to:

- Self-Evaluate: The LLM assesses its own progress through these intermediate steps.

- Search Systematically: Combined with algorithms like BFS (Breadth-First Search) or DFS (Depth-First Search), the system can systematically explore different branches, look ahead, or backtrack when it hits a dead end.
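
The two abilities above (self-evaluation plus search with backtracking) can be sketched as a small depth-first search. This is a minimal, LLM-free sketch: `propose` and `evaluate` are hypothetical stand-ins for the model calls that would generate candidate thoughts and rate them "sure / maybe / impossible", and the toy task (pick numbers, in order, that sum to a target) is an assumption chosen only so the search is easy to follow. It is not the authors' implementation.

```python
from typing import Callable, List, Optional, Tuple

State = Tuple[int, ...]  # hypothetical: a partial solution as a tuple of choices

# Lower rank = explored first; "impossible" candidates are pruned entirely.
RANK = {"sure": 0, "maybe": 1}


def tot_dfs(
    state: State,
    propose: Callable[[State], List[State]],
    evaluate: Callable[[State], str],
    is_goal: Callable[[State], bool],
    max_depth: int,
) -> Optional[State]:
    """Depth-first search over thoughts with pruning and backtracking."""
    if is_goal(state):
        return state
    if max_depth == 0:
        return None
    # Score every candidate thought once, drop the impossible ones,
    # and try "sure" candidates before "maybe" ones (sorted() is stable).
    scored = [(evaluate(c), c) for c in propose(state)]
    ranked = sorted(
        ((label, c) for label, c in scored if label != "impossible"),
        key=lambda pair: RANK[pair[0]],
    )
    for _, child in ranked:
        result = tot_dfs(child, propose, evaluate, is_goal, max_depth - 1)
        if result is not None:
            return result  # first successful branch wins
        # Otherwise this branch was a dead end: backtrack to the next sibling.
    return None


# Toy stand-in task (hypothetical): pick numbers, in order, that sum to TARGET.
NUMBERS = [9, 6, 4, 2]
TARGET = 12


def propose(state: State) -> List[State]:
    start = state[-1] + 1 if state else 0
    return [state + (i,) for i in range(start, len(NUMBERS))]


def evaluate(state: State) -> str:
    total = sum(NUMBERS[i] for i in state)
    if total > TARGET:
        return "impossible"
    return "sure" if total == TARGET else "maybe"


def is_goal(state: State) -> bool:
    return sum(NUMBERS[i] for i in state) == TARGET


solution = tot_dfs((), propose, evaluate, is_goal, max_depth=len(NUMBERS))
print([NUMBERS[i] for i in solution])  # [6, 4, 2]
```

Here the search first follows the branch starting with 9, finds that every extension either overshoots or stalls, backtracks, and then succeeds with 6 + 4 + 2. In real ToT, the quality of that pruning depends entirely on how reliable the LLM's own evaluations are.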

Image Source: Yao et al. (2023)

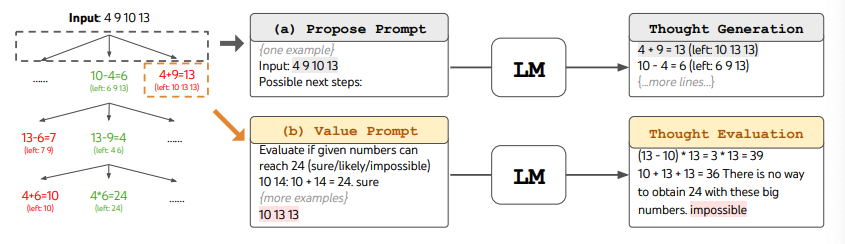

The "Game of 24" Benchmark

A classic demonstration of ToT is the Game of 24, a mathematical puzzle where the goal is to combine four given numbers, each used exactly once, with basic arithmetic (addition, subtraction, multiplication, and division) to reach 24.

While standard Chain-of-Thought (CoT) prompting often fails at this task, ToT succeeds by decomposing the problem into 3 steps, each producing one intermediate equation. Using breadth-first search, the model rates each candidate as "sure / maybe / impossible" with respect to reaching 24, discards the "impossible" ones, and keeps only the best b = 5 candidates at each step.
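
The breadth-first procedure for the Game of 24 can be sketched without an LLM. In this minimal sketch, `propose` enumerates every way to combine two remaining numbers (one intermediate equation per thought), and `evaluate` is a hypothetical deterministic stand-in for the model's "sure / maybe / impossible" judgment: it checks exactly whether 24 is reachable once two or fewer numbers remain, and returns "maybe" otherwise. The `breadth` parameter plays the role of the paper's b = 5. All names are illustrative and not taken from the authors' code.

```python
from fractions import Fraction
from itertools import combinations
from typing import List, Optional, Tuple

# A state is the list of (value, expression) pairs still left to combine.
State = List[Tuple[Fraction, str]]

RANK = {"sure": 0, "maybe": 1}


def combine(a: Tuple[Fraction, str], b: Tuple[Fraction, str]) -> List[Tuple[Fraction, str]]:
    """All results of joining two numbers with one arithmetic operation."""
    (x, ex), (y, ey) = a, b
    out = [(x + y, f"({ex} + {ey})"), (x * y, f"({ex} * {ey})"),
           (x - y, f"({ex} - {ey})"), (y - x, f"({ey} - {ex})")]
    if y != 0:
        out.append((x / y, f"({ex} / {ey})"))
    if x != 0:
        out.append((y / x, f"({ey} / {ex})"))
    return out


def propose(state: State) -> List[State]:
    """Generate candidate thoughts: each one applies a single intermediate equation."""
    children = []
    for i, j in combinations(range(len(state)), 2):
        rest = [s for k, s in enumerate(state) if k not in (i, j)]
        children.extend(rest + [r] for r in combine(state[i], state[j]))
    return children


def evaluate(state: State) -> str:
    """Stand-in for the LLM's sure/maybe/impossible judgment (assumption:
    an exact check when at most two numbers remain, "maybe" otherwise)."""
    if len(state) == 1:
        return "sure" if state[0][0] == 24 else "impossible"
    if len(state) == 2:
        values = {v for v, _ in combine(state[0], state[1])}
        return "sure" if 24 in values else "impossible"
    return "maybe"


def tot_bfs(numbers: List[int], breadth: int = 5) -> Optional[str]:
    """Breadth-first ToT: expand every frontier state, prune, keep the best `breadth`."""
    frontier: List[State] = [[(Fraction(n), str(n)) for n in numbers]]
    for _ in range(len(numbers) - 1):  # 3 steps for the usual 4 numbers
        scored = []
        for state in frontier:
            for child in propose(state):
                label = evaluate(child)
                if label != "impossible":
                    scored.append((RANK[label], child))
        scored.sort(key=lambda pair: pair[0])  # stable: "sure" before "maybe"
        frontier = [child for _, child in scored[:breadth]]
    # After the last step, only single numbers equal to 24 survive the pruning.
    return frontier[0][0][1] if frontier else None


print(tot_bfs([4, 9, 10, 13], breadth=10_000))
print(tot_bfs([1, 1, 1, 1], breadth=10_000))  # prints None: 24 is unreachable
```

Because this stand-in evaluator labels every first-step candidate "maybe", it cannot rank them, so with `breadth=5` the search may discard the branch that contains the solution. In ToT proper, that ranking comes from the LLM's own value judgments, which is why the usage lines here widen the beam instead.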

Image Source: Yao et al. (2023)

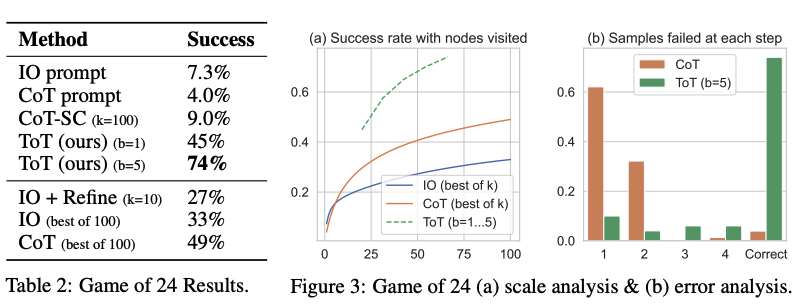

Performance Impact

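As the researchers' results show, ToT substantially outperforms standard prompting and CoT on complex reasoning benchmarks. On the Game of 24 with GPT-4, for example, ToT reached a 74% success rate, compared with 4% for CoT prompting.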

Image Source: Yao et al. (2023)

Single-Prompt Alternative: Three Experts

For those who don't want to implement a full tree-search infrastructure, Hulbert (2023) proposed a clever "single-prompt" version that mimics the ToT process:

Sample ToT Prompt:

"Imagine three different experts are answering this question. All experts will write down 1 step of their thinking, then share it with the group. Then all experts will go on to the next step, etc. If any expert realizes they're wrong at any point then they leave. The question is..."

This "mental committee" approach forces the model to simulate the evaluation and elimination process within a single generation.
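
Because the whole technique lives in the prompt text, applying it amounts to string templating. The sketch below wraps the sample prompt in a hypothetical helper, `build_tot_prompt`, with a placeholder where the question goes. Sending the result to a model is deliberately left out, since that depends on which LLM client you use.

```python
# Sample prompt from Hulbert (2023), with a placeholder where the question goes.
TOT_TEMPLATE = (
    "Imagine three different experts are answering this question. "
    "All experts will write down 1 step of their thinking, then share it with the group. "
    "Then all experts will go on to the next step, etc. "
    "If any expert realizes they're wrong at any point then they leave. "
    "The question is: {question}"
)


def build_tot_prompt(question: str) -> str:
    """Hypothetical helper: fill the single-prompt ToT template with a question."""
    return TOT_TEMPLATE.format(question=question.strip())


prompt = build_tot_prompt("Use 4, 9, 10 and 13 to make 24.")
print(prompt)
```

Unlike the search-based sketches, nothing here prunes or backtracks in code; any elimination of wrong "experts" happens, if at all, inside the model's single generation.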

[!TIP] Tree of Thoughts adds explicit search and self-evaluation on top of step-by-step reasoning. For tasks that require real-time interaction with external tools, the ReAct Framework is the next logical step.