ReAct Prompting (Reasoning and Acting) 🎭

Explore the framework that enables LLMs to interleave reasoning traces and task-specific actions, allowing them to use external tools and knowledge bases for more reliable and factual responses.

This content is adapted from Prompting Guide: ReAct. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

Chain-of-Thought (CoT) prompting has shown that LLMs can produce step-by-step reasoning traces. However, because CoT has no access to the external world, the model cannot check or update what it knows, which can lead to hallucinated facts and errors that propagate through the reasoning.

ReAct, proposed by Yao et al. (2022), is a general paradigm that combines reasoning and acting with LLMs. It prompts LLMs to generate verbal reasoning traces and actions for a task in an interleaved manner.

How it Works

ReAct is inspired by the synergies between "acting" and "reasoning" that allow humans to learn new tasks and make decisions.

The framework allows the system to:

- Induce and Update Plans: Using reasoning traces to create, track, and revise action plans as the task progresses.

- Interface with External Sources: Using action steps to gather information from knowledge bases (e.g., Wikipedia) or tools.

Image Source: Yao et al. (2022)

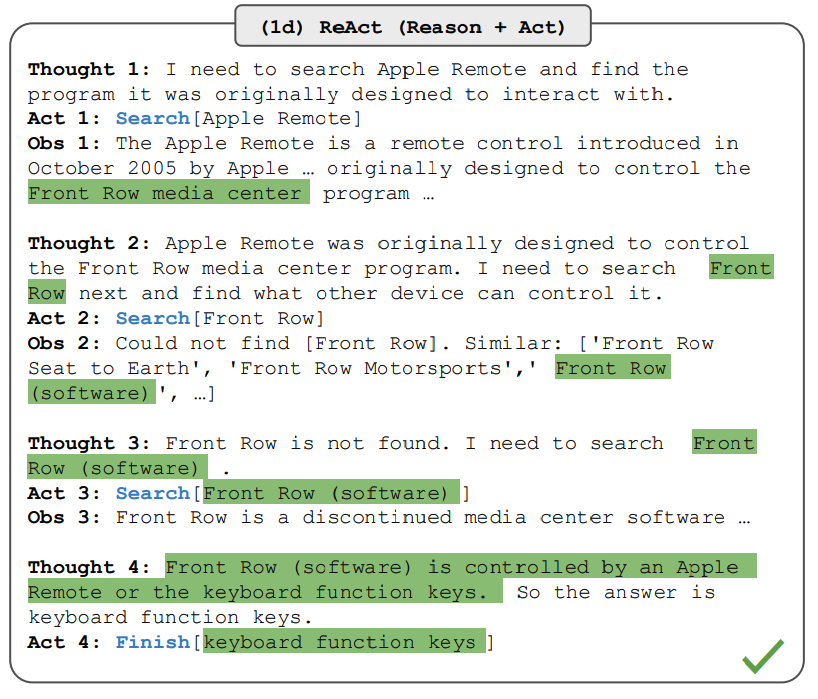

The Thought-Action-Observation Loop

In a typical ReAct trajectory, the model follows a structured cycle:

- Thought: The model's reasoning about the current state.

- Action: The specific tool or search query to execute.

- Observation: The result returned from the external environment.

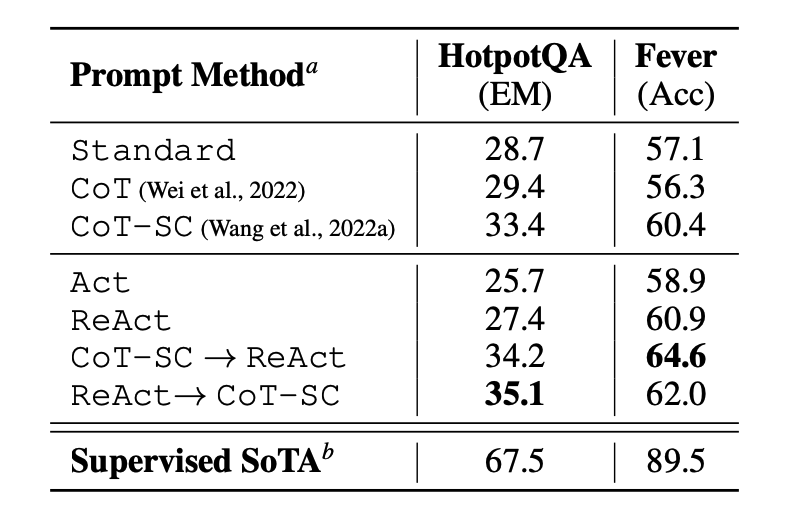
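The cycle above can be sketched as a small driver loop. This is a minimal illustration, not the authors' implementation: `react_loop`, `scripted_model`, and the `tools` dictionary are hypothetical names, the model is a scripted stand-in rather than a real LLM, and the `Name[argument]` action syntax is assumed to match the `Search[...]` and `Finish[...]` actions in the example trajectory later on this page.

```python
import re
from typing import Callable, Dict, List

# Assumed action syntax "Name[argument]", e.g. Search[Apple Remote] or Finish[answer].
ACTION_RE = re.compile(r"^(\w+)\[(.*)\]$")


def react_loop(
    question: str,
    model: Callable[[str], str],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Run Thought -> Action -> Observation cycles until Finish[...] or the step limit."""
    transcript = f"Question: {question}\n"
    for step in range(1, max_steps + 1):
        # The model is expected to reply with "Thought N: ...\nAction N: Name[arg]".
        output = model(transcript).strip()
        transcript += output + "\n"
        lines = output.splitlines()
        action_line = lines[-1] if lines else ""
        # Keep only the text after the "Action N:" label.
        action_text = action_line.partition(":")[2].strip()
        match = ACTION_RE.match(action_text)
        if match is None:
            observation = "Invalid action format."
        else:
            name, argument = match.groups()
            if name == "Finish":
                return argument
            tool = tools.get(name)
            observation = tool(argument) if tool else f"Unknown tool: {name}"
        # The observation is fed back so the next thought can build on it.
        transcript += f"Observation {step}: {observation}\n"
    return "No answer within the step limit."


def scripted_model(replies: List[str]) -> Callable[[str], str]:
    """Stand-in for an LLM: returns canned replies in order and ignores the prompt."""
    remaining = iter(replies)
    return lambda prompt: next(remaining)


# Toy knowledge base standing in for a real search tool.
knowledge = {"Apple Remote": "Designed to interact with the Front Row program."}
tools = {"Search": lambda query: knowledge.get(query, "No results.")}

model = scripted_model([
    "Thought 1: I need to find the program.\nAction 1: Search[Apple Remote]",
    "Thought 2: I now know the answer.\nAction 2: Finish[Front Row]",
])
answer = react_loop("What program was the Apple Remote designed for?", model, tools)
print(answer)
```

Swapping `scripted_model` for a call to a real LLM and `tools` for real search or calculator functions would keep the same control flow; the step limit guards against trajectories that never reach a `Finish` action.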

Knowledge-Intensive Tasks

ReAct is particularly effective for knowledge-intensive tasks such as question answering (HotpotQA) and fact verification (FEVER). Retrieved information supports the reasoning, and the reasoning in turn determines what to retrieve next, so the model's answers are grounded in evidence rather than in recalled knowledge alone.

Image Source: Yao et al. (2022)

Example Trajectory (HotpotQA)

Question: Aside from the Apple Remote, what other devices can control the program Apple Remote was originally designed to interact with?

Thought 1: I need to search Apple Remote, find the program it was originally designed to interact with, and then find other devices that can control that program.

Action 1: Search[Apple Remote]

Observation 1: The Apple Remote is a remote control... designed to interact with the Front Row media center program.

Thought 2: The program Apple Remote was designed to interact with is Front Row. I need to search Front Row and find what other devices can control it.

Action 2: Search[Front Row (software)]

Observation 2: Front Row is a discontinued media center software... it can be controlled by the Apple Remote or the Keyboard Function keys.

Thought 3: The other devices that can control Front Row are the Keyboard Function keys. I now know the final answer.

Action 3: Finish[Keyboard Function keys]

Decision Making Tasks

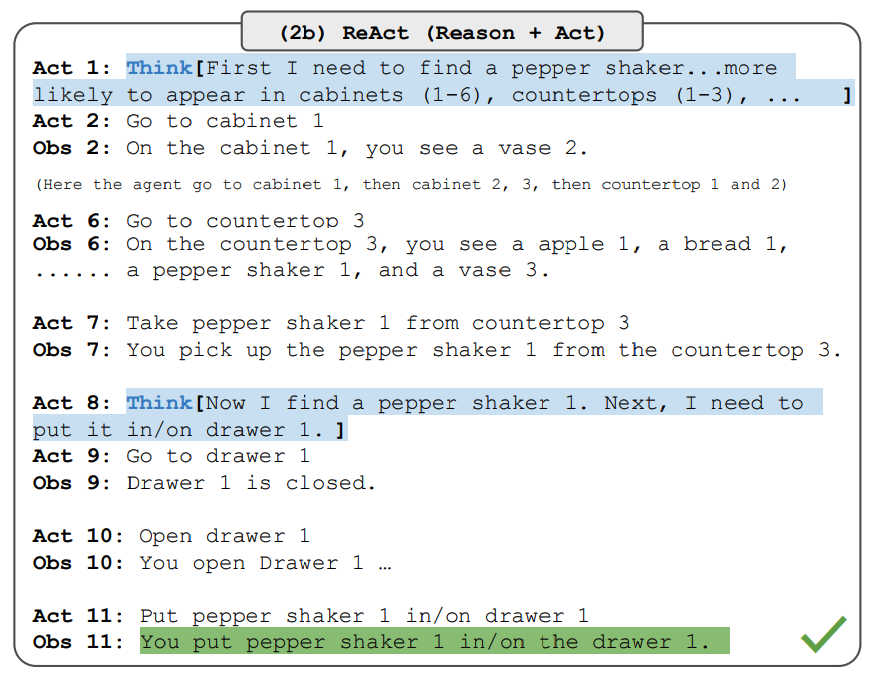

ReAct also excels in interactive environments like ALFWorld (text-based games) and WebShop (online shopping simulations). In these tasks, reasoning helps the model decompose complex goals into manageable subgoals.

Image Source: Yao et al. (2022)

Implementing a ReAct Agent with LangChain

Modern libraries like LangChain have built-in support for the ReAct framework, allowing you to create autonomous agents with just a few lines of code.

Setup and Configuration

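    # Legacy LangChain imports; newer releases have moved or deprecated these entry points.
    # Assumes OPENAI_API_KEY and SERPER_API_KEY are set in the environment.
    from langchain.llms import OpenAI
    from langchain.agents import load_tools, initialize_agent

    # Temperature 0 keeps the agent's output as deterministic as possible.
    llm = OpenAI(model_name="text-davinci-003", temperature=0)
    # "google-serper" provides web search; "llm-math" provides an LLM-backed calculator.
    tools = load_tools(["google-serper", "llm-math"], llm=llm)
    # "zero-shot-react-description" picks tools from their descriptions, ReAct-style.
    agent = initialize_agent(tools, llm, agent="zero-shot-react-description", verbose=True)

Running the Agent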

When you pass the agent a multi-step query with agent.run(...), such as "Who is Olivia Wilde's boyfriend? What is his current age raised to the 0.23 power?", the agent enters the ReAct loop:

> Entering new AgentExecutor chain...

Thought: I need to find out who Olivia Wilde's boyfriend is and then calculate his age.

Action: Search

Action Input: "Olivia Wilde boyfriend"

Observation: Olivia Wilde started dating Harry Styles...

Thought: I need to find out Harry Styles' age.

Action: Search

Action Input: "Harry Styles age"

Observation: 29 years

Thought: I need to calculate 29 raised to the 0.23 power.

Action: Calculator

Action Input: 29^0.23

Observation: Answer: 2.169459462491557

Final Answer: Harry Styles is 29 years old and his age raised to the 0.23 power is 2.169...

ReAct + CoT: The authors found that the best approach often involves combining ReAct with Chain-of-Thought (CoT), allowing the model to use both internal knowledge and external information simultaneously.
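One way to picture this combination is as a pair of fallback rules, which Yao et al. (2022) describe in terms of CoT with self-consistency (CoT-SC): back off from ReAct to CoT-SC when ReAct does not finish within its step budget, and back off from CoT-SC to ReAct when the majority answer appears in fewer than half of the samples. The sketch below is a hedged illustration of those two rules only. The function names, the `sample_cot` and `run_react` callables, and the default of 5 samples are assumptions made for this example, and the stubs stand in for real model calls.

```python
from collections import Counter
from typing import Callable, Optional


def react_then_cot_sc(
    question: str,
    run_react: Callable[[str], Optional[str]],
    sample_cot: Callable[[str], str],
    n_samples: int = 5,
) -> str:
    """ReAct -> CoT-SC: use majority-voted CoT answers if ReAct gives no answer."""
    answer = run_react(question)  # None means no Finish within the step budget
    if answer is not None:
        return answer
    answers = [sample_cot(question) for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


def cot_sc_then_react(
    question: str,
    sample_cot: Callable[[str], str],
    run_react: Callable[[str], Optional[str]],
    n_samples: int = 5,
) -> str:
    """CoT-SC -> ReAct: keep the majority CoT answer only if agreement is strong."""
    answers = [sample_cot(question) for _ in range(n_samples)]
    top_answer, votes = Counter(answers).most_common(1)[0]
    if votes >= n_samples / 2:
        return top_answer
    # Weak agreement suggests internal knowledge is unreliable, so consult tools.
    react_answer = run_react(question)
    return react_answer if react_answer is not None else top_answer


# Stub samplers: five CoT samples with no clear majority, and a ReAct run that answers "E".
samples = iter(["A", "B", "C", "A", "D"])
combined = cot_sc_then_react("q", lambda q: next(samples), lambda q: "E")
print(combined)

# Stub ReAct run that fails to finish, so the CoT majority is used instead.
fallback = react_then_cot_sc("q", lambda q: None, lambda q: "A")
print(fallback)
```

In the first call the top CoT answer has only 2 of 5 votes, so the function defers to the ReAct stub; in the second, the ReAct stub returns nothing and the CoT majority is used.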

[!IMPORTANT] While powerful, ReAct depends heavily on the quality of retrieved information. Non-informative search results can derail the model's reasoning. Always ensure your tools provide clean, relevant data.
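One modest safeguard is to normalize tool output before it is appended to the prompt as an observation. The helper below is a hypothetical sketch, not part of LangChain or the ReAct paper: `clean_observation`, `NO_RESULT_MESSAGE`, and the 500-character default are illustrative choices. It collapses stray whitespace, replaces empty results with an explicit message the model can reason about, and truncates overly long text.

```python
import re

# Hypothetical message shown to the model when a tool returns nothing useful.
NO_RESULT_MESSAGE = "No relevant results found. Try a different query."


def clean_observation(raw: str, max_chars: int = 500) -> str:
    """Normalize raw tool output before it is added to the prompt."""
    # Collapse runs of whitespace left over from HTML or multi-line snippets.
    text = re.sub(r"\s+", " ", raw or "").strip()
    if not text:
        # An explicit message gives the model something concrete to react to.
        return NO_RESULT_MESSAGE
    if len(text) > max_chars:
        # Truncate, then cut back to the last space to avoid ending mid-word.
        text = text[:max_chars].rsplit(" ", 1)[0] + "..."
    return text


print(clean_observation("  The Apple   Remote\n is a remote control. "))
print(clean_observation(""))
print(clean_observation("aaaa bbbb cccc", max_chars=7))
```

Wrapping each tool function with a helper like this keeps observations short and predictable, though it cannot fix results that are simply irrelevant to the query.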