Prompt Chaining

Learn how to break complex tasks into smaller, manageable subtasks by chaining multiple prompts together for higher reliability and transparency.

This content is adapted from Prompting Guide: Prompt Chaining. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

Prompt chaining is one of the most effective techniques for improving the reliability and performance of LLMs. Instead of writing one massive, detailed prompt, you break the task into smaller subtasks and feed the output of each prompt into the next one as input.
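To make the idea concrete, here is a minimal sketch of a chain runner. It assumes a model can be represented as any function that maps a prompt string to a response string. The names `run_chain` and `fake_llm` and the `{input}` placeholder convention are illustrative assumptions, not part of any particular library.

```python
from typing import Callable, List

# Assumption: a "model" is any function that maps a prompt string to a reply string.
LLM = Callable[[str], str]


def run_chain(llm: LLM, templates: List[str], initial_input: str) -> str:
    """Run the prompts in order, feeding each output into the next template."""
    current = initial_input
    for template in templates:
        # Each template must contain an "{input}" placeholder (and no other braces).
        prompt = template.format(input=current)
        current = llm(prompt)
    return current


def fake_llm(prompt: str) -> str:
    """Toy stand-in so the sketch runs offline: wraps the prompt in brackets."""
    return f"[{prompt}]"


result = run_chain(
    fake_llm,
    ["Summarize: {input}", "Translate to French: {input}"],
    "a long report",
)
print(result)  # [Translate to French: [Summarize: a long report]]
```

In a real application you would replace `fake_llm` with a call to your model provider. The nested brackets in the printed result show how the first step's output ends up embedded in the second step's prompt.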

Why Chain Prompts?

- Complexity Management: Splitting tasks makes them easier for the LLM to process without losing track of instructions.

- Transparency: You can see exactly where a chain fails, making debugging much simpler.

- Controllability: You can apply transformations or filters between steps to ensure the output stays on track.

- Reliability: Smaller, focused tasks tend to be less prone to hallucination than a single complex prompt that tries to do everything at once.
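The transparency and controllability points can be sketched in code. The example below records every intermediate output in a trace and lets each step run an optional check on its output before passing it on. The `Step` dataclass, `run_traced_chain`, `non_empty`, and `fake_llm` are hypothetical names introduced for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Step:
    name: str
    template: str  # must contain an "{input}" placeholder
    # Optional hook that cleans or validates the output; raises ValueError on failure.
    check: Optional[Callable[[str], str]] = None


def run_traced_chain(
    llm: Callable[[str], str], steps: List[Step], initial_input: str
) -> Tuple[str, List[Tuple[str, str]]]:
    """Run the steps in order and return the final output plus a per-step trace."""
    trace: List[Tuple[str, str]] = []
    current = initial_input
    for step in steps:
        output = llm(step.template.format(input=current))
        if step.check is not None:
            try:
                output = step.check(output)
            except ValueError as err:
                # Name the failing step so the broken link is easy to find.
                raise ValueError(f"step '{step.name}' failed: {err}") from err
        trace.append((step.name, output))
        current = output
    return current, trace


def non_empty(text: str) -> str:
    """Example filter: trim whitespace and reject empty outputs."""
    cleaned = text.strip()
    if not cleaned:
        raise ValueError("empty output")
    return cleaned


def fake_llm(prompt: str) -> str:
    """Toy stand-in for a model so the sketch runs offline."""
    if prompt.startswith("List keywords"):
        return "  chaining, prompts  "
    return "Title about " + prompt.split(": ", 1)[1]


steps = [
    Step("keywords", "List keywords: {input}", check=non_empty),
    Step("title", "Write a title: {input}"),
]
final, trace = run_traced_chain(fake_llm, steps, "some text")
print(final)  # Title about chaining, prompts
print(trace)
```

If a step produces an empty output, the raised error names that step, which is the debugging benefit described in the list above.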

Use Case: Document QA

A common use case for prompt chaining is answering questions based on large documents. Rather than asking the model to "Read this and answer X," we create a two-step chain.

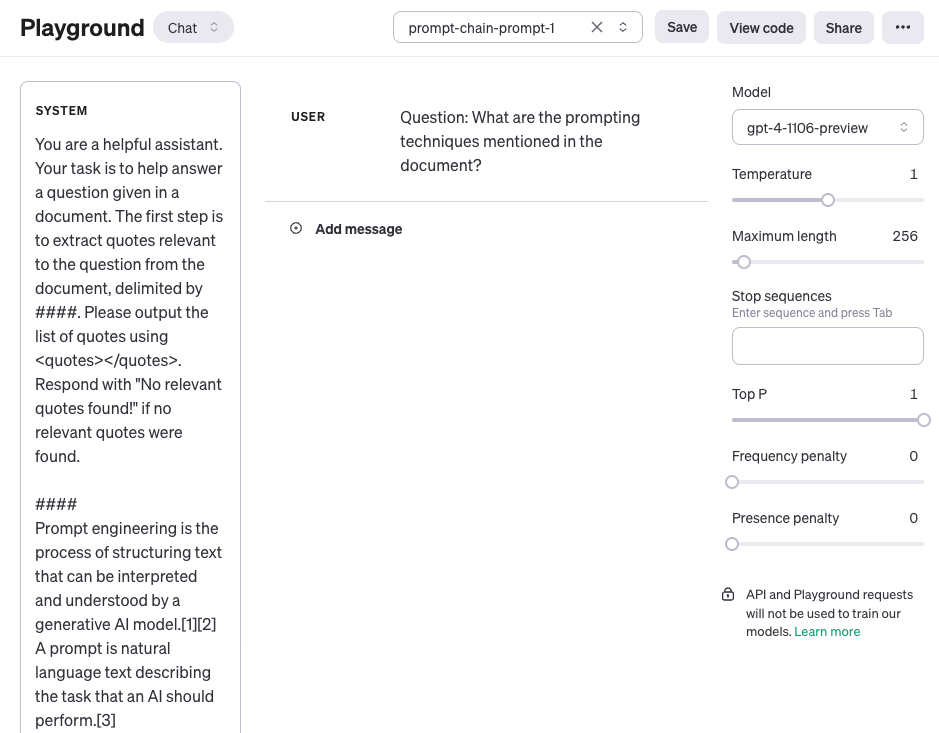

Step 1: Quote Extraction

The first prompt focuses purely on identifying and extracting relevant segments from the raw document.

Prompt 1:

"Your task is to extract quotes relevant to the question from the document below. Output the list of quotes using <quotes></quotes> tags."
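A possible way to wire up this first step is sketched below. The instruction text is the prompt above; how the document and question are appended, and the helper names `build_extraction_prompt` and `parse_quotes`, are assumptions made for illustration. The parser also assumes the model returns one quote per line inside the tags.

```python
import re
from typing import List

EXTRACT_INSTRUCTION = (
    "Your task is to extract quotes relevant to the question from the document below. "
    "Output the list of quotes using <quotes></quotes> tags."
)


def build_extraction_prompt(document: str, question: str) -> str:
    """Assemble prompt 1. The layout after the instruction is an assumption."""
    return f"{EXTRACT_INSTRUCTION}\n\nDocument:\n{document}\n\nQuestion: {question}"


def parse_quotes(response: str) -> List[str]:
    """Return the non-empty lines inside the first <quotes>...</quotes> block."""
    match = re.search(r"<quotes>(.*?)</quotes>", response, flags=re.DOTALL)
    if match is None:
        return []
    quotes = []
    for line in match.group(1).splitlines():
        # Drop surrounding whitespace and a leading "-" bullet, if present.
        cleaned = line.strip().lstrip("-").strip()
        if cleaned:
            quotes.append(cleaned)
    return quotes


# Hypothetical model response, used only to exercise the parser.
sample_response = (
    "Sure.\n<quotes>\n"
    "- Chaining splits a task into subtasks.\n"
    "- Each output feeds the next prompt.\n"
    "</quotes>"
)
print(parse_quotes(sample_response))
```

Parsing the tags in code rather than passing the raw response along gives you a natural place to handle the case where no quotes were found.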

Step 2: Answer Generation

The second prompt takes the original document plus the extracted quotes to formulate the final, user-facing answer.

Prompt 2:

"Given these <quotes> and the original document, please compose a friendly and accurate answer to the user's question."
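Putting the two prompts together, the whole chain might look like the sketch below. The prompt wording comes from the two steps above; the function name `answer_question`, the way the document and question are appended, and the `fake_llm` stand-in (which also records the prompts it receives) are assumptions for illustration.

```python
import re
from typing import Callable, List

PROMPT_1 = (
    "Your task is to extract quotes relevant to the question from the document below. "
    "Output the list of quotes using <quotes></quotes> tags."
)
PROMPT_2 = (
    "Given these <quotes> and the original document, please compose a friendly "
    "and accurate answer to the user's question."
)


def answer_question(llm: Callable[[str], str], document: str, question: str) -> str:
    """Two-step chain: extract quotes first, then answer using quotes plus document."""
    # Step 1: quote extraction.
    first = llm(f"{PROMPT_1}\n\nDocument:\n{document}\n\nQuestion: {question}")
    match = re.search(r"<quotes>.*?</quotes>", first, flags=re.DOTALL)
    # Fall back to an empty block if the model did not follow the format.
    quotes = match.group(0) if match else "<quotes></quotes>"
    # Step 2: answer generation, with the step 1 output embedded in the prompt.
    return llm(f"{PROMPT_2}\n\n{quotes}\n\nDocument:\n{document}\n\nQuestion: {question}")


calls: List[str] = []  # records every prompt so the chain can be inspected


def fake_llm(prompt: str) -> str:
    """Toy stand-in for a model so the sketch runs offline."""
    calls.append(prompt)
    if prompt.startswith("Your task is to extract"):
        return "<quotes>The warranty lasts two years.</quotes>"
    return "Good news! The warranty lasts two years."


answer = answer_question(
    fake_llm,
    document="Our policy: The warranty lasts two years. Returns take 30 days.",
    question="How long is the warranty?",
)
print(answer)      # Good news! The warranty lasts two years.
print(len(calls))  # 2
```

The recorded `calls` list makes it easy to confirm that the second prompt really contains the quotes produced by the first.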

Performance and Personalization

Prompt chaining is particularly powerful when building Conversational Assistants. By chaining prompts, you can:

- Classify the user's intent.

- Retrieve the relevant knowledge.

- Rewrite the response in a specific brand voice or personalized tone.
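The three steps above can be sketched as a small pipeline. Everything here is hypothetical: the `KNOWLEDGE_BASE` dictionary stands in for a real retrieval system, the prompt wording is invented for the example, and `fake_llm` replaces an actual model call. Note that in this sketch only the classification and rewriting steps call the model, while retrieval is a plain lookup.

```python
from typing import Callable, Dict

# Hypothetical knowledge base keyed by intent label.
KNOWLEDGE_BASE: Dict[str, str] = {
    "billing": "Invoices are issued on the first day of each month.",
    "shipping": "Orders ship within two business days.",
}


def assistant_reply(llm: Callable[[str], str], user_message: str, brand_voice: str) -> str:
    """Classify the intent, retrieve matching facts, then rewrite them in a given tone."""
    # Step 1: classify the user's intent.
    labels = ", ".join(sorted(KNOWLEDGE_BASE))
    intent = llm(
        f"Classify the intent of this message as one of: {labels}.\n"
        f"Message: {user_message}\n"
        "Answer with the label only."
    ).strip().lower()
    # Step 2: retrieve the relevant knowledge (a dictionary lookup in this sketch).
    facts = KNOWLEDGE_BASE.get(intent, "No matching article was found.")
    # Step 3: rewrite the response in the requested voice.
    return llm(
        f"Rewrite the following facts as a reply in a {brand_voice} tone.\n"
        f"Facts: {facts}\n"
        f"User message: {user_message}"
    )


def fake_llm(prompt: str) -> str:
    """Toy stand-in for a model so the sketch runs offline."""
    if prompt.startswith("Classify"):
        return " Shipping\n"
    facts_line = next(line for line in prompt.splitlines() if line.startswith("Facts: "))
    return "Happy to help! " + facts_line[len("Facts: "):]


reply = assistant_reply(fake_llm, "Where is my package?", "warm and concise")
print(reply)  # Happy to help! Orders ship within two business days.
```

Normalizing the classifier's output with `strip().lower()` before the lookup is a small example of applying a transformation between steps.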

Latency: Keep in mind that every step in a chain requires a separate API call, so total latency grows with the number of steps. Use chaining for tasks where accuracy matters more than near-instant response times.
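To see where the time goes, you can time each step of a chain. The sketch below is one possible approach using only the standard library; `timed_chain` and `slow_fake_llm` are hypothetical names, and the 50 ms sleep merely simulates a network round trip.

```python
import time
from typing import Callable, List, Tuple


def timed_chain(
    llm: Callable[[str], str], templates: List[str], initial_input: str
) -> Tuple[str, List[float]]:
    """Run the chain and record how many seconds each step took."""
    timings: List[float] = []
    current = initial_input
    for template in templates:
        start = time.perf_counter()
        current = llm(template.format(input=current))
        timings.append(time.perf_counter() - start)
    return current, timings


def slow_fake_llm(prompt: str) -> str:
    """Toy stand-in for a model: sleeps briefly to simulate an API round trip."""
    time.sleep(0.05)
    return prompt.upper()


output, timings = timed_chain(
    slow_fake_llm, ["step one: {input}", "step two: {input}"], "hi"
)
print(output)  # STEP TWO: STEP ONE: HI
print(f"per-step: {[round(t, 3) for t in timings]}, total: {sum(timings):.3f}s")
```

Because the steps run sequentially, the total is the sum of the per-step times, so each added step adds roughly one more round trip.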

[!TIP] Prompt chaining is often the foundation for building AI Agents. For more automated reasoning, check out the ReAct framework next.