Automatic Prompt Engineer (APE) 🤖

Discover how to frame prompt engineering as a black-box optimization problem, using LLMs to automatically generate, search, and select the most effective task instructions.

This content is adapted from Prompting Guide: APE. It has been curated and organized for educational purposes on this portfolio. No copyright infringement is intended.

Introduction

As prompt engineering becomes more complex, manual trial-and-error can be inefficient. Automatic Prompt Engineer (APE), proposed by Zhou et al. (2022), is a framework for automatic instruction generation and selection.

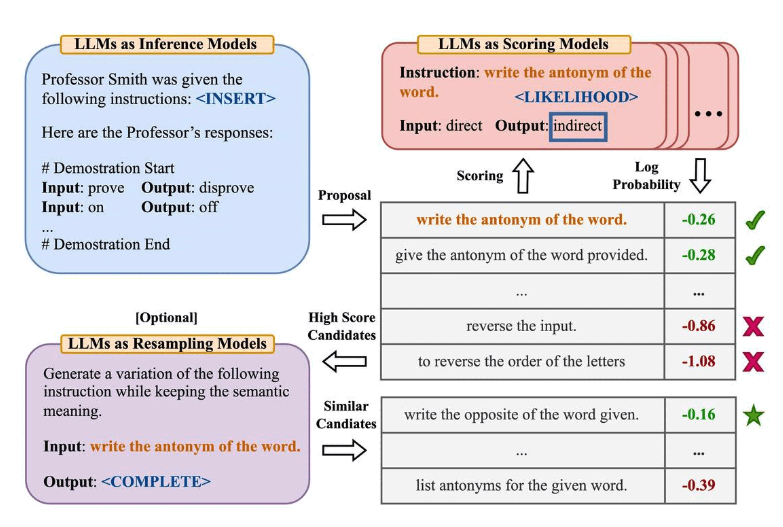

Image Source: Zhou et al. (2022)


How APE Works

APE frames instruction generation as a natural language synthesis task and solves it as a black-box optimization problem, using LLMs to generate and search over candidate instructions. The procedure has three steps:

- Instruction Generation: A large language model (acting as an inference model) is given input-output demonstrations to generate multiple candidate instructions for a task.

- Instruction Execution: These candidate instructions are executed using a target model.

- Selection: The most effective instruction is selected based on computed evaluation scores (e.g., accuracy on a validation set).

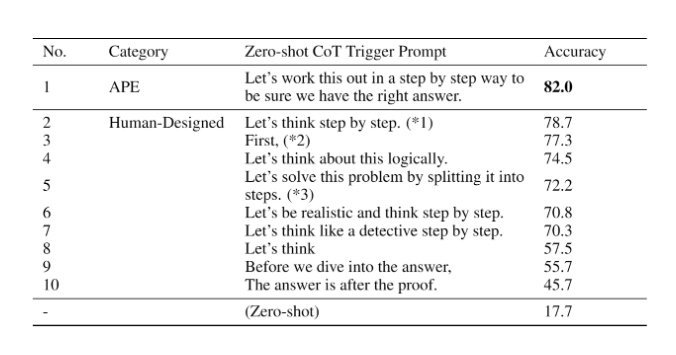
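The three steps above can be sketched as a small generate, execute, and select loop. This is a minimal illustration rather than the authors' implementation: `toy_inference_model` and `toy_target_model` are hypothetical stand-ins for real LLM calls, the wording in `build_generation_prompt` is an assumed template, and exact-match accuracy is just one possible scoring function.

```python
from typing import Callable, List, Tuple

Demo = Tuple[str, str]  # (input, expected output)


def build_generation_prompt(demos: List[Demo]) -> str:
    """Format demonstrations into a request for an instruction (assumed wording)."""
    pairs = "\n".join(f"Input: {x}\nOutput: {y}" for x, y in demos)
    return f"Here are some input-output pairs:\n{pairs}\nThe instruction was:"


def toy_inference_model(prompt: str, n: int) -> List[str]:
    """Hypothetical stand-in for the inference LLM: returns n canned candidates."""
    pool = [
        "Write the input in reverse.",
        "Write the input in uppercase.",
        "Repeat the input unchanged.",
    ]
    return pool[:n]


def toy_target_model(instruction: str, x: str) -> str:
    """Hypothetical stand-in for the target LLM: follows a few known instructions."""
    if "uppercase" in instruction:
        return x.upper()
    if "reverse" in instruction:
        return x[::-1]
    return x


def accuracy(instruction: str, val_set: List[Demo],
             target: Callable[[str, str], str]) -> float:
    """Score an instruction by exact-match accuracy on a validation set."""
    hits = sum(target(instruction, x) == y for x, y in val_set)
    return hits / len(val_set)


def ape_select(demos: List[Demo], val_set: List[Demo],
               n_candidates: int = 3) -> Tuple[str, float]:
    """Generate candidate instructions, execute each, and keep the best scorer."""
    prompt = build_generation_prompt(demos)                   # step 1: generation
    candidates = toy_inference_model(prompt, n_candidates)
    scores = {c: accuracy(c, val_set, toy_target_model)       # step 2: execution
              for c in candidates}
    best = max(scores, key=scores.get)                        # step 3: selection
    return best, scores[best]


if __name__ == "__main__":
    demos = [("cat", "CAT"), ("dog", "DOG")]
    val_set = [("bird", "BIRD"), ("fish", "FISH")]
    print(ape_select(demos, val_set))
```

In a real setup, both stand-ins would be replaced by calls to actual models, and the validation set would be held out from the demonstrations used for generation.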

Beating the Human Baseline

One of APE's most famous achievements was discovering a better zero-shot Chain-of-Thought (CoT) prompt than the human-engineered classic: "Let's think step by step."

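APE discovered the prompt "Let's work this out in a step by step way to be sure we have the right answer." This prompt elicits chain-of-thought reasoning and improves performance over the human-written one: Zhou et al. (2022) report gains from 78.7 to 82.0 on MultiArith and from 40.7 to 43.0 on GSM8K.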

Image Source: Zhou et al. (2022)
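To make the comparison concrete, here is a minimal sketch of how the two trigger phrases slot into a zero-shot CoT prompt. The `Q:`/`A:` layout and the helper name `zero_shot_cot_prompt` are assumptions for illustration. The sample question is made up, and the follow-up step that extracts a final answer from the model's reasoning is omitted.

```python
HUMAN_TRIGGER = "Let's think step by step."
APE_TRIGGER = ("Let's work this out in a step by step way "
               "to be sure we have the right answer.")


def zero_shot_cot_prompt(question: str, trigger: str) -> str:
    """Append a reasoning trigger to a question (assumed Q:/A: layout)."""
    return f"Q: {question.strip()}\nA: {trigger}"


if __name__ == "__main__":
    # Hypothetical arithmetic question, used only to show the prompt shape.
    question = "A shop sells 3 boxes of 12 pencils. How many pencils is that?"
    for trigger in (HUMAN_TRIGGER, APE_TRIGGER):
        print(zero_shot_cot_prompt(question, trigger))
        print("---")
```

Evaluating both prompts on the same held-out questions with the same target model is what allows the two triggers to be compared fairly.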


Beyond APE: The World of Automated Prompting

APE is one of several approaches to automated prompt optimization. If you're interested in going deeper into this field, here are some key papers:

- OPRO: Uses LLMs themselves as optimizers of prompts. One prompt it discovered, beginning "Take a deep breath", improves performance on math problems.

- Prompt-OIRL: Uses offline inverse reinforcement learning to generate query-dependent prompts.

- AutoPrompt: Employs gradient-guided search to create task-specific prompts.

- Prefix Tuning & Prompt Tuning: Lightweight alternatives to fine-tuning that learn "soft prompts" through backpropagation.

[!TIP] Automated prompt engineering is transforming how we build production systems. By moving from manual "vibes-based" prompting to data-driven optimization, we can achieve higher reliability and performance at scale.